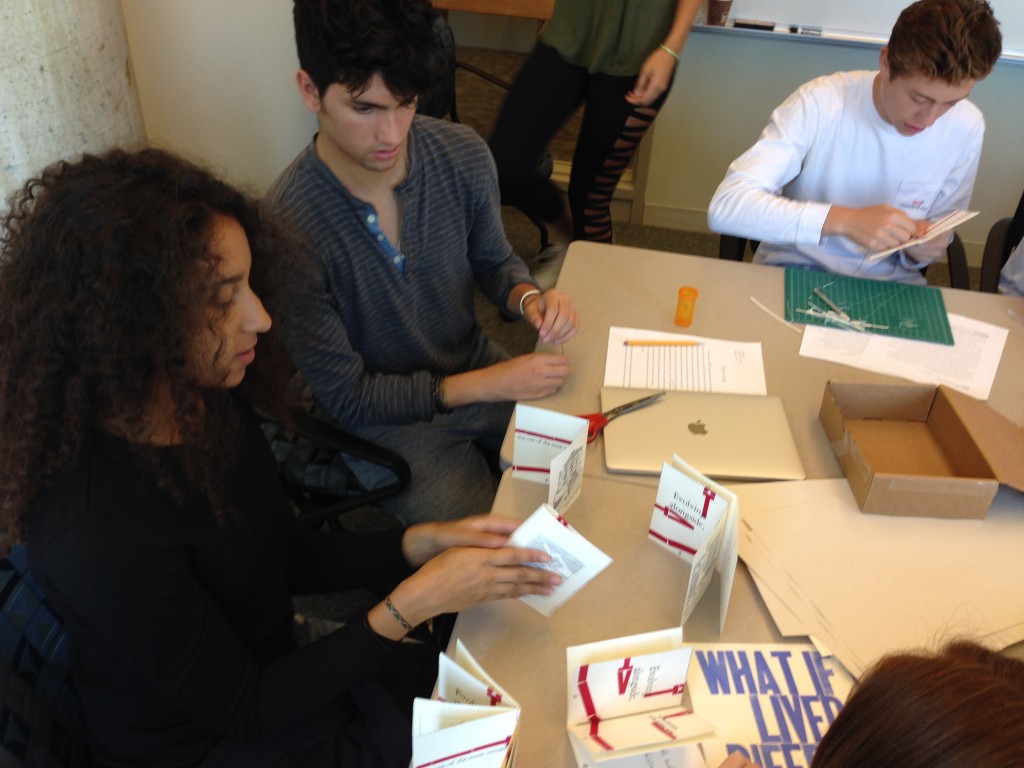

Pictured above: Study participant Jeff Greenwald, Hamilton ’17

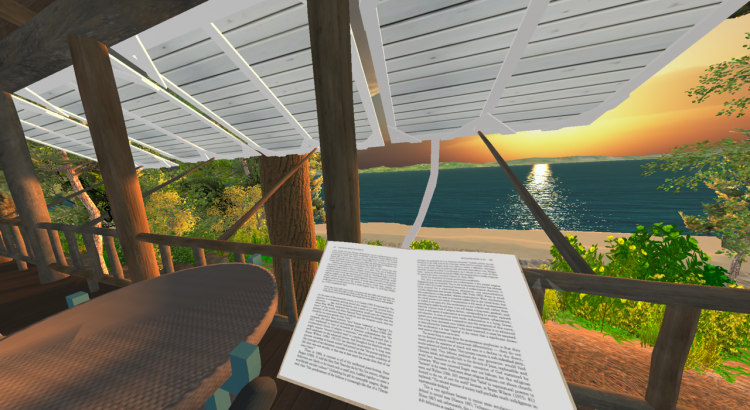

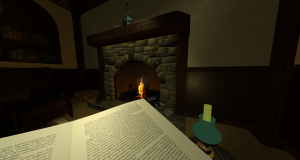

Researchers studying awe in a lab setting can’t take participants to awe-inducing locations like mountaintops, and the standard alternative, having participants watch videos of such settings, has limitations. To help address this problem, Hayley Goodrich ’17, a Psychology concentrator at Hamilton College, and Educational Technologist Kyle Burnham recently set out to explore the use of Virtual Reality (VR) for Goodrich’s thesis project on the experience of awe.

The awe literature proposes a loosely defined theoretical connection between awe and meaning. According to Goodrich:

> awe arises when something in the environment is vast and cannot readily be incorporated into one’s existing meaning frameworks.

Goodrich wanted to explore whether awe actually emerges in response to a violation of some meaning-making structure. To study such a connection, she first needed to make participants feel awe.